OrbNet AI Innovation Lab

The OrbNet AI Innovation Lab is our "virtual" innovation lab created as a strategic asset to OrboGraph, our clients, and prospective clients looking to evaluate the capabilities of our solutions.

The innovation lab is led by Avikam Baltsan, CTO of OrboGraph, in conjunction with data scientists and AI architects with expertise in data intelligence, analytics, scoring, and computer vision/machine learning/deep learning. Its primary goal is to formalize a process in which Artificial Neural Network (ANN)-based products are developed with a faster time to market and optimal performance levels. These products are the underlying technology incorporated into OrboAnywhere: OrbNet AI with deep learning models for check recognition, and OrbNet Forensic AI for On-Us and Deposit Fraud detection.

There are multiple benefits to formalizing an innovation lab:

- Defines the technology foundation: In this case, focusing on AI and deep learning.

- Reinforces our company charter: To solve difficult challenges in the financial and healthcare payments industries involving AI-based image processing and computer vision.

- Formalizes the development process and aligns with industry leaders: This methodology is one with which large organizations are familiar. See how these 31 companies have implemented an innovation lab concept.

- Demonstrable results: When blended with agile development, outcomes can be demonstrated much faster than with traditional development.

- Provides early access for POC: OrboGraph can facilitate a number of proof of concept (POC) scenarios depending on the needs of the business partner, financial institution, or healthcare RCM organization.

- Offline performance testing: The client delivers images to OrboGraph, which then executes detailed remote performance testing on the images. This option is popular for prospective Anywhere Recognition, Anywhere Fraud, and Access EOB Conversion clients.

- On-premise software testing: The client installs the OrboGraph software package, then performs internal testing variations based on OrboGraph recommendations and client-specific workflow requirements. This option is only relevant for prospective OrboAnywhere clients.

OrbNet AI Technology

The principles, nomenclature, and underlying technology within OrbNet AI and OrbNet Forensic AI leverage AI with deep learning models. They are neither based on recognition algorithms nor reliant upon OCR toolkits.

The OrbNet AI innovation lab development team continuously researches and develops a wide range of deep learning technologies, delivered in highly optimized models for check recognition and fraud detection.

Components and frameworks incorporated into the platform include Convolutional Neural Networks (CNN), Recurrent Neural Networks (RNN), Gradient Boosted Decision Trees, Gen-II text classification models, TensorFlow RT framework, and CUDA drivers for optimal inference on NVIDIA and other GPUs.

Enhancing Fraud Detection Capabilities

Check fraud has risen dramatically over the past few years -- with VALID Systems making a bold prediction of $35 billion in check fraud losses in 2025.

Artificial Intelligence and deep learning technologies have paved the way to fight the fraudsters and protect your customers -- enabling banks and financial institutions to detect fraudulent attacks and activities before their customers' funds are accessed.

One of the most prominent use cases for AI and deep learning in payments fraud is analyzing the past behavior of accounts, training deep learning models on thousands to millions of historical transactions. When a new transaction occurs, the technology can compare it to past transactions in milliseconds to spot suspicious activity. With each new transaction, the artificial intelligence continues to learn.
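To make the idea of comparing a new transaction against an account's history concrete, here is a minimal sketch. It is not OrboGraph's method: it swaps the deep learning models for a plain statistical z-score, and the function names, the sample amounts, and the threshold of 3.0 are all hypothetical values chosen for illustration.

```python
from statistics import mean, stdev
from typing import Sequence


def anomaly_score(history: Sequence[float], amount: float) -> float:
    """Return how many standard deviations `amount` lies from the historical mean.

    A simple z-score stands in here for a trained model (an assumption made
    purely for illustration).
    """
    if len(history) < 2:
        return 0.0  # too little history to judge
    mu = mean(history)
    sigma = stdev(history)
    if sigma == 0:
        # Every past amount was identical: any deviation is maximally unusual.
        return 0.0 if amount == mu else float("inf")
    return abs(amount - mu) / sigma


def is_suspicious(history: Sequence[float], amount: float, threshold: float = 3.0) -> bool:
    """Flag the transaction when its score exceeds a hypothetical threshold."""
    return anomaly_score(history, amount) > threshold


# Hypothetical check amounts previously written on one account.
past_amounts = [120.0, 95.5, 130.0, 110.25, 101.0]

typical = is_suspicious(past_amounts, 115.0)   # close to past behavior
outlier = is_suspicious(past_amounts, 4800.0)  # far outside past behavior
print(typical, outlier)
```

In a production system the score would come from models trained on far richer features than the amount alone, and the history would be updated as each new transaction is confirmed.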

AI and deep learning are applied to both On-Us and Deposit Fraud detection -- with image forensic AI and transaction analytics applied to on-us and inclearing items and a combination of image forensic AI, transactional and behavioral analytics, and consortium data for deposit fraud. Click the links below to learn more.

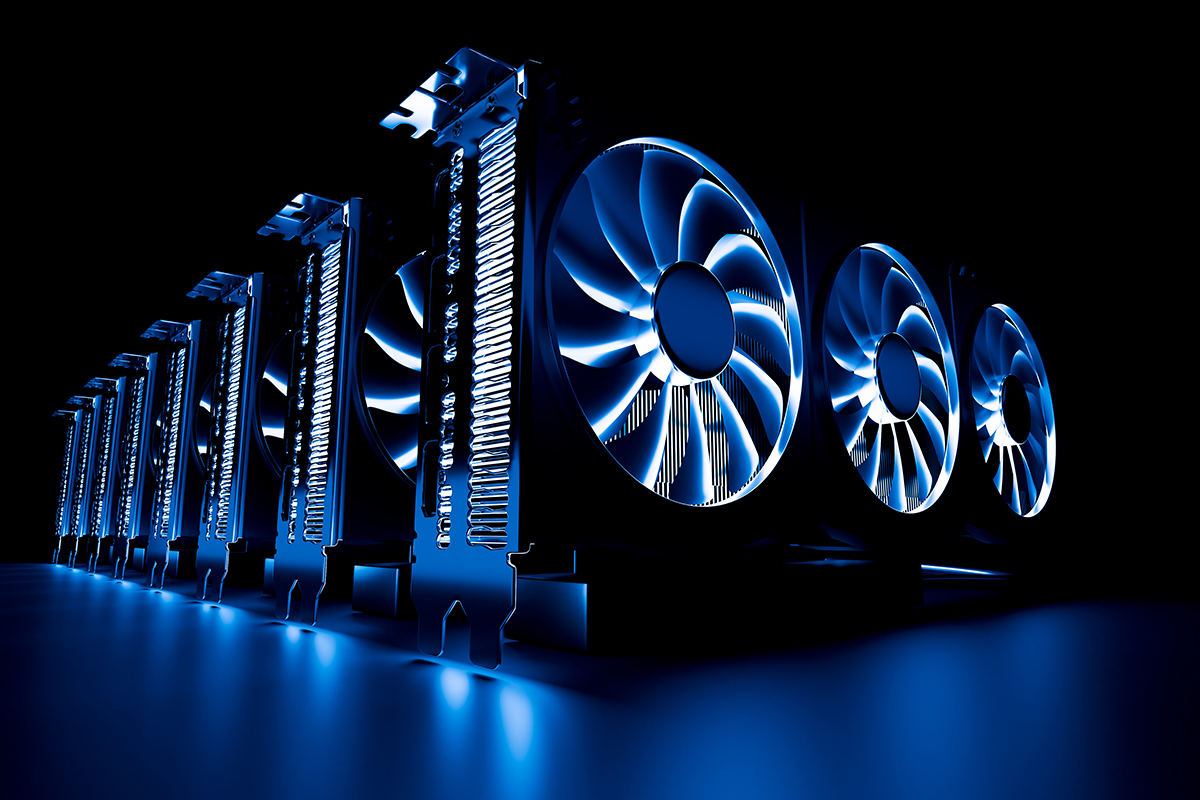

Graphics Processing Units

Unleashing the power of OrbNet AI is contingent upon access to significant processor capacity. Graphics Processing Units (GPUs) have become the de facto standard for deep learning models.

In computing, floating-point operations per second (FLOPS, flops or flop/s) is a measure of computer performance. It is useful in fields of scientific computation that require floating-point calculations. As an example, one of the OrbNet AI models can consume between 1 billion (1,000,000,000) and 10 billion (10,000,000,000) floating-point operations per CAR field.

This may seem like an enormous overhead to the layperson, but the NVIDIA Tesla V100 (one of NVIDIA's strongest GPU families) performs 14 teraFLOPS (14,000,000,000,000 floating-point operations per second) in single precision.

The result is that these individual calculations by field can be easily scaled with today's hardware.
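The arithmetic behind this claim can be worked through directly from the figures quoted above. The short sketch below divides the quoted peak rate by the quoted cost per CAR field. The result is a theoretical upper bound only; the `utilization` parameter is a hypothetical knob added here because real workloads rarely reach peak throughput.

```python
# Figures quoted in the text above.
PEAK_FLOPS = 14e12           # V100 single precision: operations per second
OPS_PER_FIELD_LOW = 1e9      # lower bound: operations per CAR field
OPS_PER_FIELD_HIGH = 10e9    # upper bound: operations per CAR field


def fields_per_second(peak_flops: float, ops_per_field: float,
                      utilization: float = 1.0) -> float:
    """Theoretical fields processed per second on one GPU.

    `utilization` (0..1) is a hypothetical factor for the share of peak
    throughput actually achieved; 1.0 gives the ideal upper bound.
    """
    return peak_flops * utilization / ops_per_field


best_case = fields_per_second(PEAK_FLOPS, OPS_PER_FIELD_LOW)
worst_case = fields_per_second(PEAK_FLOPS, OPS_PER_FIELD_HIGH)

print(f"Lightest model: up to {best_case:,.0f} fields per second")
print(f"Heaviest model: up to {worst_case:,.0f} fields per second")
```

At peak throughput this works out to roughly 1,400 to 14,000 fields per second on a single GPU, which is why the per-field cost scales comfortably on current hardware.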

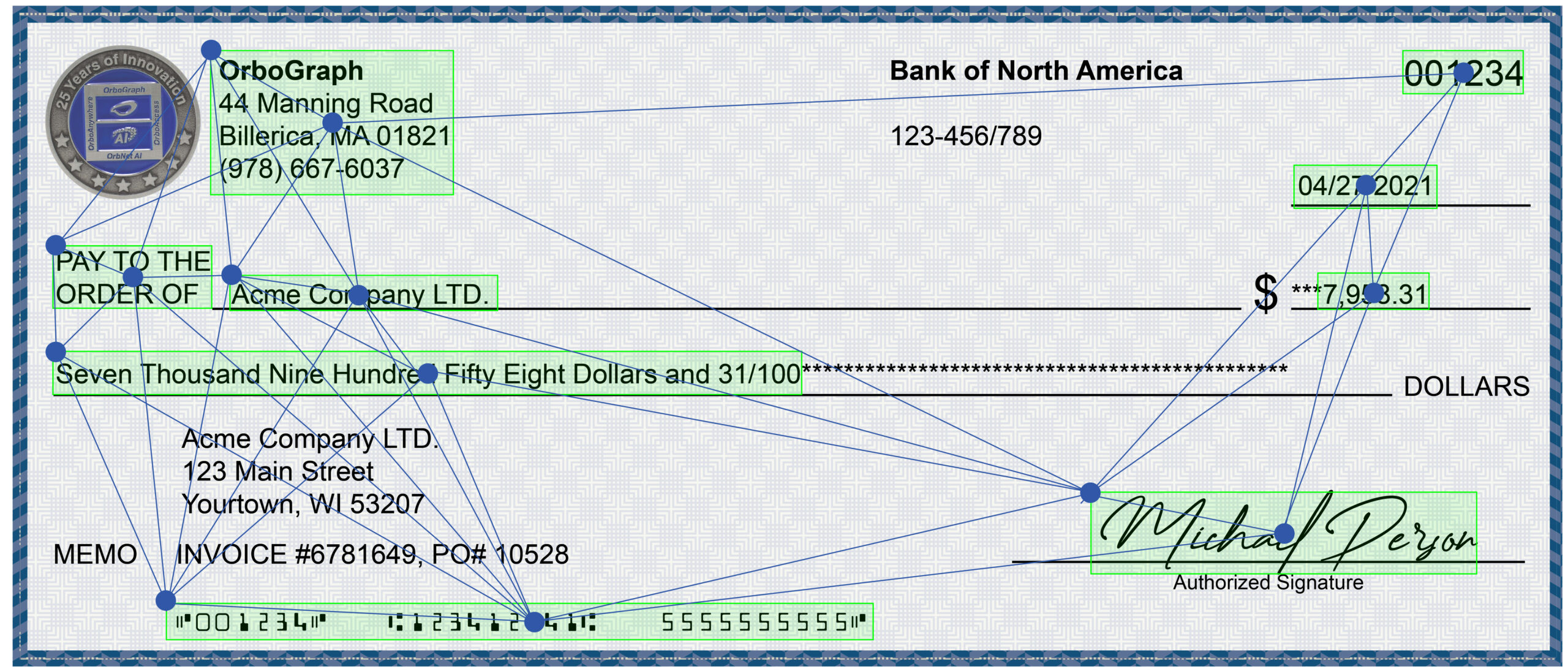

OrbNet AI Process Flow

The internal process for recognizing fields on checks and full-page documents is dramatically different from traditional OCR, ICR, and CAR/LAR recognition techniques. A benefit of this process is that with greater processing capacity, the recognition process can be collapsed into the following stages:

- Field Detection: Referred to as "object detection" in AI nomenclature, this deep learning model will identify the ROI (Region Of Interest), locking onto the coordinates of the field. Multiple fields can be detected simultaneously rather than serially.

- Text Classification: Deep learning models will identify and deliver a classification specific to the fields identified on a document. We use targeted techniques applied to checks, money orders, and other financial documents. OrbNet AI continues to be enhanced with other document types as well.

- Interpretation: The output value(s) and scores represent the probability of success, normalized via several internally deployed decision tree models for optimal performance.
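The three stages above can be sketched as a small pipeline. This is an illustrative outline only, not the OrbNet AI implementation: every name (`DetectedField`, `FieldResult`, `run_pipeline`) is hypothetical, and the detection, classification, and calibration steps are stand-in stubs where trained models and decision tree models would sit.

```python
from dataclasses import dataclass
from typing import Any, Callable, List, Tuple

Box = Tuple[int, int, int, int]  # (x, y, width, height) of a region of interest


@dataclass
class DetectedField:
    name: str
    box: Box


@dataclass
class FieldResult:
    name: str
    value: str
    score: float


def run_pipeline(
    image: Any,
    detect: Callable[[Any], List[DetectedField]],
    classify: Callable[[Any, DetectedField], Tuple[str, float]],
    calibrate: Callable[[float], float],
) -> List[FieldResult]:
    """Chain field detection, text classification, and interpretation."""
    results = []
    # Stage 1: a single detection call returns every field at once.
    for field in detect(image):
        # Stage 2: classify the text inside the field's region of interest.
        value, raw_score = classify(image, field)
        # Stage 3: normalize the raw score into a final confidence.
        results.append(FieldResult(field.name, value, calibrate(raw_score)))
    return results


# Hypothetical stubs standing in for trained models.
def fake_detect(image: Any) -> List[DetectedField]:
    return [
        DetectedField("amount", (400, 80, 160, 40)),
        DetectedField("date", (420, 20, 120, 30)),
    ]


def fake_classify(image: Any, field: DetectedField) -> Tuple[str, float]:
    canned = {"amount": ("125.00", 0.97), "date": ("2024-01-15", 0.88)}
    return canned[field.name]


def clamp(raw_score: float) -> float:
    # Placeholder for decision-tree-based normalization.
    return max(0.0, min(1.0, raw_score))


results = run_pipeline(None, fake_detect, fake_classify, clamp)
for r in results:
    print(r.name, r.value, r.score)
```

Passing the three stages in as callables keeps the flow itself independent of any particular model, so each stage can be replaced or retrained without touching the others.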

Looking Forward

OrbNet AI and OrbNet Forensic AI are the future of check recognition and check fraud detection. For more specific application benefits, please visit the OrboAnywhere webpage.

Visit the platform modernization page to review how the healthcare and financial industries are modernizing their infrastructure.